The Future of Intelligent Technology

Technology keeps getting better and better, not simply at a steady rate but exponentially. With any exponential increase, there are really only two possibilities: it will either level off or explode. That explosion could mean the destruction of everything we know, for worse or (depending on your point of view) for better.

Futurists such as Ray Kurzweil look forward to this explosion or, at the very least, consider it an inevitable step in human evolution.

For decades, Kurzweil has written about the ultimate effects of exponential technological advances. His book The Singularity Is Near: When Humans Transcend Biology (2005) brings together his investigations and theories to foresee a day when there will be no distinction between man and machine, a day that may arrive much sooner than you might think.

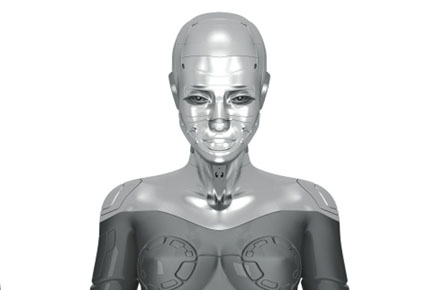

In the field of artificial intelligence, there are a few rough milestones: the ability of AI to make independent decisions, to learn from experience, to pass for human intelligence (the Turing Test), to accurately model human intelligence and, ultimately, to surpass it.

It’s debatable how many of these we’ve already reached, but Kurzweil, extrapolating from historical trends, places the machines at our level by 2029 and beyond it by 2045. The final threshold, according to AI theorists (beginning with Erik Mueller in 1987), is the solving of the “AI-complete” problem or, to coin a phrase, the ‘one small step for man’ that provides the one giant leap for machinekind.

Technological Singularity

As popularized by Vernor Vinge, one potential (and arguably inevitable) result of accelerating change is a singularity. In the same way that runaway gravitational collapse can produce a black hole, technological acceleration, specifically the increasing sophistication of artificial intelligence, could set off an “intelligence explosion.”

A black hole’s gravity is so powerful that not even light can escape it, so anyone outside the black hole’s ‘event horizon’ is unable to observe what happens inside it. A technological singularity is likewise opaque to us, in the sense that our own intelligence is insufficient to predict the results of an intelligence explosion.

Vinge’s 1993 paper credited Stan Ulam, who wrote of a conversation with John von Neumann in which the two discussed “the ever accelerating progress of technology and changes in the mode of human life, which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.”

Moore’s Law

Moore’s Law is named after a 1965 observation by Intel co-founder Gordon E. Moore; its simplest interpretation is that the number of transistors on a computer chip, and with it the chip’s power, roughly doubles every two years. But after 40 years in which the trend held surprisingly well, Moore added, “it can’t continue forever. The nature of exponentials is that you push them out and eventually disaster happens.”
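
To make the arithmetic behind that “surprisingly consistent” trend concrete, here is a rough Python sketch. It assumes a strict two-year doubling period, which is an idealization of the real trend, and the function name moores_law_factor is purely illustrative. Forty years at that rate works out to 20 doublings, roughly a million-fold increase.

```python
def moores_law_factor(years: float, doubling_period: float = 2.0) -> float:
    """Return the growth factor after `years`, assuming capacity doubles
    every `doubling_period` years (an idealized reading of Moore's Law)."""
    return 2 ** (years / doubling_period)


if __name__ == "__main__":
    # 40 years at one doubling every two years is 20 doublings.
    factor = moores_law_factor(40)
    print(f"{factor:,.0f}x")  # prints 1,048,576x
```

Because every further two years doubles the running total, keeping the trend alive demands ever larger absolute gains, which is the pressure behind Moore’s remark about exponentials.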

What would “disaster” mean in the field of intelligent technology? There’s always the specter of a cybernetic takeover of Terminator (or Neuromancer) proportions, but Moore’s own view is that the industry, or simply the Laws of Physics, will eventually be unable to sustain further improvements.

Some more pessimistic predictions envision events such as stock market crashes, which would undoubtedly have drastic and far-reaching effects on a technologically dependent economy and on society as a whole.

Before or after this happens, we may simply reach a sort of technological plateau. Between insurmountable limits imposed by those aforementioned Laws of Physics and, especially, by the human brain and body, there may come a point at which further advances lie beyond our capabilities. There’s certainly precedent for this in human history: times of rapid advancement, such as the Renaissance, have been followed by periods of stagnation and instability.

However, Kurzweil and others take the wider view of Big History and offer a third possibility: a disaster, or at least an explosion, of a very different kind. It would be, in a way, a true Rise of the Machines, but with humans rising along with them and, ultimately, merging with them. In Kurzweil’s words:

“My view is that despite our profound limitations of thought, constrained as we are today to a mere hundred trillion interneuronal connections in our biological brains, we nonetheless have sufficient powers of abstraction to make meaningful statements about the nature of life after the Singularity.

Most importantly, it is my view that the intelligence that will emerge will continue to represent the human civilization, which is already a human-machine civilization. This will be the next step in evolution, the next high level paradigm shift.”

Admittedly, Kurzweil’s evidence and arguments form a body of work too extensive to do more than touch upon here. Alongside accelerating returns and artificial intelligence, another key focus is futurism’s ongoing examination of how biomedical technology might augment the human body and prolong human life.

Still, concentrating on artificial intelligence drives home the idea that we are rapidly approaching a historical flash point at which the stories of technology and human evolution fuse irrevocably and begin a monumental, largely unforeseeable new chapter.

About the Author: Greg Buckskin is a lifelong sci-fi addict and technology geek. He writes for CableTV.com.